How Risk Scoring Helps Identify Escalation Patterns

Most people hear "risk scoring" and picture a spreadsheet. A column of names, a column of numbers, a color-coded flag next to anyone over a certain threshold. Someone fills it out, someone files it, and the file sits in a shared drive until an incident forces it back into the conversation.

That is not risk scoring. That is documentation with math attached.

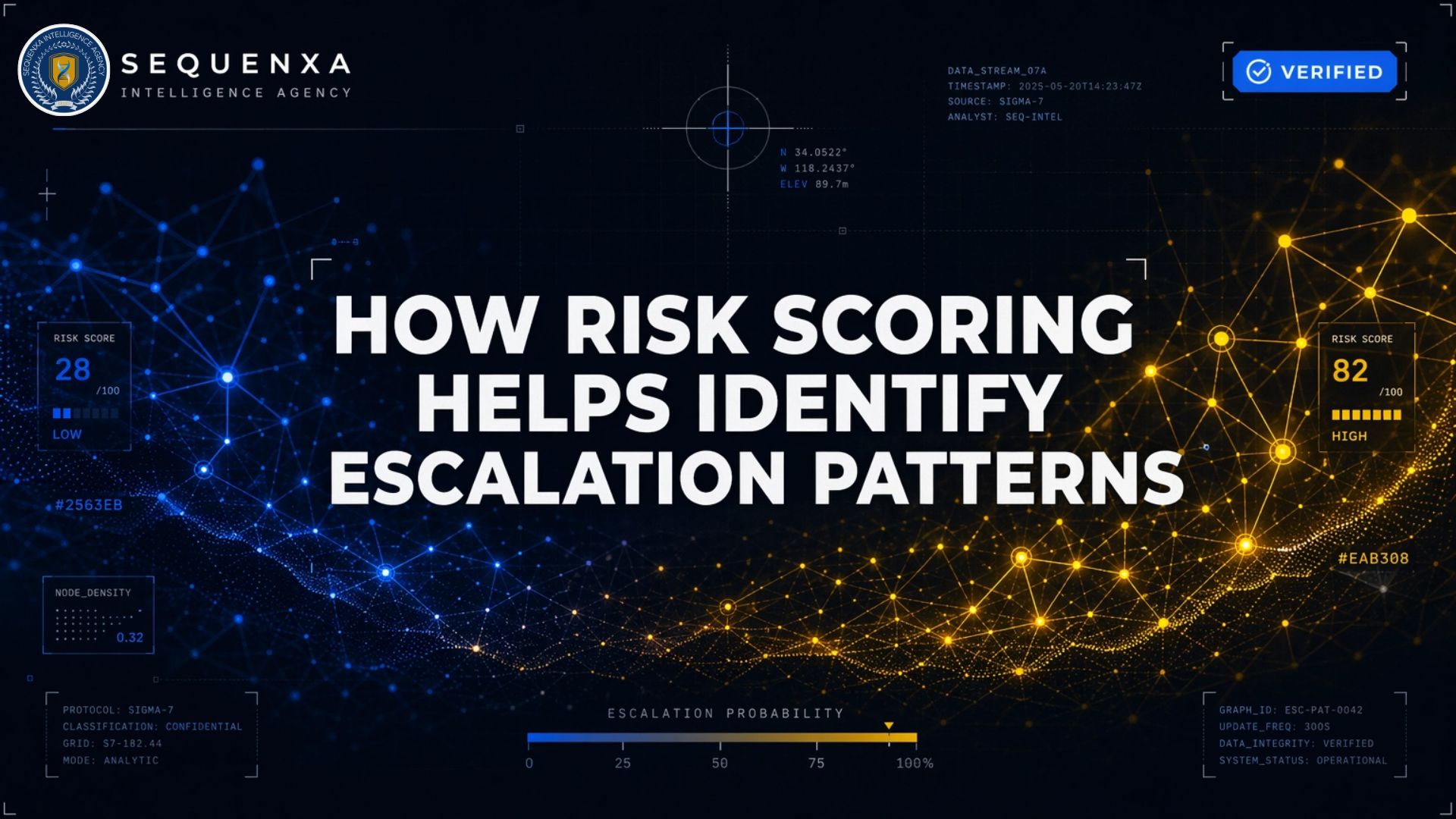

Risk scoring, done operationally, is a way of tracking movement. It assigns weights to specific behavioral and contextual indicators, aggregates them into a composite score, and watches how that score changes over time. In the threat assessment literature, this is the quantitative side of behavioral analysis: it translates observation into something a team can actually compare across time and across cases. The number itself is not the point. The trajectory is. A static 4 out of 10 means something very different from a 4 that was a 1 six weeks ago and is climbing.

That trajectory is what escalation looks like before it has a name.

The gap between "having a score" and "seeing the pattern"

The FBI's Behavioral Analysis Unit has spent close to three decades studying mass attacks. The conclusion that surfaces in every major BAU study, restated in slightly different language each time, is the same: attackers do not snap. They progress along an observable pathway, and that pathway produces signals in the weeks and months before the act.

A BAU analysis of 59 active shooter subjects found that 100% had evidence of a grievance, 90% had evidence of violent ideation, 73% had evidence of intent to cause harm, and 62% had evidence of behavioral pathway actions: research, planning, preparation, breach. In 37% of cases, the subject's thinking was observable even when physical pathway action was not.

The behaviors were there. In almost every case, someone around the subject noticed something.

The failure was not one of detection. It was one of correlation. An HR complaint in January, a social media post in March, a manager's offhand concern in May, a change in purchase patterns in July: each individually explainable, each filed in its own system, each assessed by a different person using a different threshold. No one was watching the score move. There was no score to move.

That is the gap a functioning risk scoring system is supposed to close. It gives organizations a single, continuously updated view of how much concern a subject warrants, based on signals that would otherwise be distributed across files that never get compared. A real threat assessment process is meant to close the same gap; risk scoring is the quantitative layer underneath it.

What a risk score is actually measuring

A useful risk score combines three categories of input.

Static factors. Things that don't change much: history of violence, access to weapons, prior disciplinary events, known affiliations, relevant criminal record. These establish a baseline. They do not predict escalation on their own (most people with concerning histories never act), but they anchor the floor of the assessment.

Proximal warning behaviors. This is the research-validated core. The typology proposed by J. Reid Meloy and colleagues in 2012 identifies eight accelerating patterns: pathway, fixation, identification, novel aggression, energy burst, leakage, directly communicated threat, and last resort. The literature describes these as acute, dynamic changes in behavior, the kind that push a case out of "monitor" territory and into "manage" territory, even when the eventual target hasn't been named.

In a study of 111 lone-actor terrorists across the U.S. and Europe, four proximal warning behaviors (pathway, fixation, identification, and leakage) were each present in 77% or more of cases. The same cluster has shown up across school shooters, public figure attackers, and workplace violence cases. The specific ideology changes. The behavioral signature does not.

Contextual stressors. Job loss, relationship breakdown, financial collapse, legal trouble, recent humiliation, a perceived injustice that has not been resolved. These don't cause violence on their own. They intensify everything else. A preoccupation that was manageable before the divorce is no longer manageable after it, and the trajectory changes.

A risk score weights these three inputs, sums them, and produces a number. But the number by itself is the least interesting part of the system.
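
Here is a minimal Python sketch of that weighted sum. It assumes an analyst has already rated each category on a 0 to 1 scale. The `Assessment` type, the category weights, and the 0 to 10 output scale are hypothetical placeholders chosen for illustration. They are not values from the research cited above.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only. Proximal warning behaviors are
# weighted highest because the text treats them as the research-validated core;
# the exact values are assumptions, not calibrated figures.
CATEGORY_WEIGHTS = {"static": 1.0, "proximal": 3.0, "contextual": 1.5}


@dataclass
class Assessment:
    static: float      # 0-1 rating of static/historical factors
    proximal: float    # 0-1 rating of proximal warning behaviors
    contextual: float  # 0-1 rating of contextual stressors


def composite_score(a: Assessment, scale: float = 10.0) -> float:
    """Weighted sum of the three categories, normalized to the range 0..scale."""
    raw = (CATEGORY_WEIGHTS["static"] * a.static
           + CATEGORY_WEIGHTS["proximal"] * a.proximal
           + CATEGORY_WEIGHTS["contextual"] * a.contextual)
    return round(scale * raw / sum(CATEGORY_WEIGHTS.values()), 2)


print(composite_score(Assessment(static=0.5, proximal=0.2, contextual=0.8)))  # 4.18
```

Normalizing by the total weight keeps the output on a fixed scale even if the weights are later recalibrated. That matters once scores are compared over time.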

Why static scoring misses escalation

Here is the part that should bother anyone running one of these programs: a score captured once and never revisited is not a risk score. It is a snapshot of a moment that has already passed.

Financial compliance literature has been making this point for years. A model that scores someone at onboarding and then leaves the score alone cannot see behavior change, because it is not looking. The same applies to insider threat programs, workplace violence programs, and any system meant to detect targeted aggression.

Escalation is defined by change, not by state. A subject who has been at a 3 for two years is managing. A subject who was at a 1 in March and is at a 3 in July is accelerating. The second scenario is far more dangerous, and a static scoring system cannot tell them apart.
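
Only their trajectory tells the two subjects above apart. The minimal Python sketch below assumes each subject has a list of dated scores. It fits a least-squares slope and checks acceleration before level. The function names, the 30-day normalization, and both thresholds are hypothetical choices for illustration.

```python
from datetime import date


def slope_per_30_days(history):
    """Least-squares slope of a score history, in points per 30 days.

    history: list of (date, score) pairs, in any order.
    """
    points = sorted(history)
    if len(points) < 2:
        return 0.0
    t0 = points[0][0]
    xs = [(d - t0).days for d, _ in points]
    ys = [score for _, score in points]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    var_x = sum((x - mean_x) ** 2 for x in xs)
    if var_x == 0:  # all assessments on the same day: no trajectory to measure
        return 0.0
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return 30.0 * cov / var_x


def triage(history, level_threshold=7.0, slope_threshold=0.25):
    """Check acceleration first, then level (both thresholds are hypothetical)."""
    if slope_per_30_days(history) >= slope_threshold:
        return "accelerating"
    if sorted(history)[-1][1] >= level_threshold:
        return "elevated"
    return "monitor"


# At 3 for two years versus 1 in March rising to 3 in July.
stable = [(date(2023, 7, 1), 3.0), (date(2024, 7, 1), 3.0), (date(2025, 7, 1), 3.0)]
rising = [(date(2025, 3, 1), 1.0), (date(2025, 5, 1), 2.0), (date(2025, 7, 1), 3.0)]
print(triage(stable), triage(rising))  # monitor accelerating
```

Both subjects sit at 3 on their latest assessment; only the slope separates them. A least-squares fit is used rather than a simple first-versus-last difference so that a single noisy reassessment moves the result less.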

The research makes this explicit. In the attacker samples, the proximal warning behaviors show up bunched together, several at once, reinforcing each other. In the samples of people who raised concern but never acted, the same behaviors appear scattered, isolated, not clustering. The signal that separates a case requiring active management from one requiring monitoring is not the presence of any single indicator. It is the co-occurrence and acceleration of several at once.
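
One way to turn "bunched together" versus "scattered" into a number is to count how many distinct warning behaviors fall inside any single window of time. The sketch below illustrates that idea. It is not a metric taken from the cited studies, and the 30-day window and the behavior labels are assumptions.

```python
from datetime import date, timedelta


def max_cluster(observations, window_days=30):
    """Largest number of distinct warning behaviors seen inside any window.

    observations: list of (date, behavior) pairs. Each window starts at an
    observation and runs for window_days (a hypothetical default).
    """
    obs = sorted(observations)
    best = 0
    for i, (start, _) in enumerate(obs):
        end = start + timedelta(days=window_days)
        distinct = {behavior for day, behavior in obs[i:] if day < end}
        best = max(best, len(distinct))
    return best


clustered = [
    (date(2025, 6, 1), "fixation"),
    (date(2025, 6, 8), "identification"),
    (date(2025, 6, 15), "leakage"),
    (date(2025, 6, 20), "pathway"),
]
scattered = [
    (date(2024, 1, 10), "fixation"),
    (date(2024, 5, 2), "leakage"),
    (date(2024, 9, 18), "identification"),
]
print(max_cluster(clustered), max_cluster(scattered))  # 4 1
```

Both histories contain fixation, identification, and leakage. The clustered case concentrates four behaviors in under three weeks. The scattered case never has more than one in any 30-day window.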

An effective system therefore has to do three things most programs don't. Scores have to be revisited on a schedule, not only when an incident forces a review. Rate of change matters at least as much as whether a threshold has been crossed, and the system should flag acceleration before it flags level. And the inputs have to be correlated across data sources that normally live in separate systems owned by separate teams.

Most internal programs do none of these well. That is why the gap exists.

The eight warning behaviors, in practice

The Meloy typology sounds abstract until you translate it into what the signals actually look like in a case file. Here is what a trained analyst watches for, and what each behavior contributes to the score.

Pathway. Research, planning, preparation, or implementation of an attack. Reconnaissance of a target location. Dry runs. Acquisition of weapons or materials outside prior patterns. This is the highest-weight behavior because it directly postdicts action. When pathway shows up, monitoring becomes active management.

Fixation. A preoccupation with a person, a cause, or a grievance that intensifies and is accompanied by a deterioration in social and occupational functioning. Fixation is often the first proximal warning behavior to appear. It is also the one most often dismissed, because it looks like obsession, and obsession looks like someone having a bad stretch.

Identification. A psychological shift in how the subject sees themselves: adopting a warrior identity, associating with past attackers or movements, acquiring the aesthetics and paraphernalia of violent actors. The behavioral research shows a specific predictive move: fixation is what I think about all the time; identification is who I become. That transition is a scoring trigger.

Novel aggression. An act of violence by someone with no prior history of it, carried out as a test. A first punch. A first threat made in public. A first act of intimidation that crosses a line the person had not previously crossed. In workplace settings, this is often the moment HR finally opens a file, long after the behavioral pattern should have triggered one.

Energy burst. A sudden increase in activity related to the target or the grievance: communications, travel, research, reconnaissance. Often clustered in the days or weeks before an attack.

Leakage. Communication to a third party of intent to harm. Posted online, said in a text, disclosed to a family member, mentioned in a manifesto draft. Leakage is present in the majority of targeted violence cases, which is why bystander reporting is so often the difference between intervention and post-incident review.

Directly communicated threat. A threat made directly to the target or to law enforcement. Present less often than most people assume. Its absence is not protective.

Last resort. A signal that the subject has concluded violence is the only remaining option. Farewell behaviors, giving away possessions, writing a statement meant to be found after the event.

Each of these carries a different weight in a well-built scoring model. Pathway and last resort weight high. Fixation weights moderate at baseline and high when combined with identification. Energy burst is a velocity multiplier more than a standalone score.

A case might show three of these at low intensity and still warrant active management, because the combination is the signal. The total is not the sum. The pattern is.
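
Here is a sketch of how that weighting might be encoded, assuming behaviors are recorded as a set of labels. Only the relative ordering comes from the text: pathway and last resort weigh high, fixation is moderate unless paired with identification, and energy burst acts as a multiplier. Every numeric value is a hypothetical placeholder, including the weights for behaviors the text does not rank.

```python
# Hypothetical weights that follow only the relative ordering in the text:
# pathway and last resort high, fixation moderate. Values for the other
# behaviors are placeholders, not research-derived.
BASE_WEIGHTS = {
    "pathway": 5.0,
    "last_resort": 5.0,
    "novel_aggression": 3.0,
    "leakage": 3.0,
    "directly_communicated_threat": 3.0,
    "fixation": 2.0,
    "identification": 2.0,
}
FIXATION_WITH_IDENTIFICATION = 4.0  # fixation upgraded to "high" when paired with identification
ENERGY_BURST_MULTIPLIER = 1.5       # energy burst scales the score instead of adding to it
KNOWN = set(BASE_WEIGHTS) | {"energy_burst"}


def behavior_score(present):
    """Score a set of observed warning behaviors (labels as in BASE_WEIGHTS)."""
    unknown = set(present) - KNOWN
    if unknown:
        raise ValueError(f"unknown behaviors: {sorted(unknown)}")
    weights = dict(BASE_WEIGHTS)
    if "fixation" in present and "identification" in present:
        weights["fixation"] = FIXATION_WITH_IDENTIFICATION
    score = sum(weights[b] for b in present if b != "energy_burst")
    if "energy_burst" in present:
        score *= ENERGY_BURST_MULTIPLIER
    return score


print(behavior_score({"fixation"}))                                    # 2.0
print(behavior_score({"fixation", "identification"}))                  # 6.0
print(behavior_score({"fixation", "identification", "energy_burst"}))  # 9.0
print(behavior_score({"energy_burst"}))                                # 0.0
```

Fixation and identification together score 6.0 here, more than the 4.0 their separate weights would sum to. That is one simple way to encode "the combination is the signal." Energy burst on its own contributes nothing; it only amplifies what is already present.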

What risk scoring actually catches that humans miss

There is a version of this argument that presents risk scoring as a replacement for human judgment. That is wrong. Structured scoring augments judgment. It does not replace it.

Here is what it does better than unaided review.

Aggregation across time. A manager reading a single concerning email in isolation sees one incident. A scoring system layered across communications, HR files, access logs, and public records sees that this is the fifth qualifying event in twelve weeks, and the third in the last four. The manager is reading one data point. The system is reading a slope.
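
The "fifth event in twelve weeks, third in the last four" reading can be expressed as two window counts and a rate comparison. The minimal sketch below assumes qualifying events have already been identified and dated. The window lengths mirror the example in the text. The rule that a higher recent rate means acceleration is an assumption.

```python
from datetime import date, timedelta


def events_in_window(event_dates, as_of, weeks):
    """Count qualifying events in the `weeks` weeks ending on as_of (inclusive)."""
    start = as_of - timedelta(weeks=weeks)
    return sum(1 for d in event_dates if start < d <= as_of)


def is_accelerating(event_dates, as_of, short_weeks=4, long_weeks=12):
    """True when the recent weekly event rate exceeds the longer-window rate."""
    recent_rate = events_in_window(event_dates, as_of, short_weeks) / short_weeks
    long_rate = events_in_window(event_dates, as_of, long_weeks) / long_weeks
    return recent_rate > long_rate


# Five qualifying events in twelve weeks, three of them in the last four.
events = [date(2025, 5, 15), date(2025, 6, 5), date(2025, 7, 8),
          date(2025, 7, 20), date(2025, 7, 29)]
as_of = date(2025, 7, 31)
print(events_in_window(events, as_of, 12), events_in_window(events, as_of, 4))  # 5 3
print(is_accelerating(events, as_of))  # True
```

Three events in four weeks is 0.75 per week, against roughly 0.42 per week over twelve. That difference is the slope the manager reading one email cannot see.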

Aggregation across sources. The IT logs show unusual after-hours database access. The HR file shows a recent formal complaint. The physical security log shows the subject entered a part of the building they have no business in. Social media monitoring flagged a post that met the leakage threshold. Any one of these in isolation is explainable. Together they are a case. Nothing in a typical organization correlates them. A scoring system does.
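
The sketch below shows cross-source correlation. It assumes every event has already been attributed to a shared subject identifier across HR, IT, physical security, and open-source feeds. In practice, resolving identities across those systems is often the hard part. The subject IDs, source labels, 60-day window, and three-source threshold are hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta


def correlate(events, window_days=60, min_sources=3):
    """Flag subjects with signals from >= min_sources distinct systems in any window.

    events: iterable of (subject_id, source, date). Assumes subject_id has
    already been resolved to the same person across every source system.
    """
    by_subject = defaultdict(list)
    for subject_id, source, day in events:
        by_subject[subject_id].append((day, source))
    flagged = {}
    for subject_id, items in by_subject.items():
        items.sort()
        for i, (start, _) in enumerate(items):
            end = start + timedelta(days=window_days)
            sources = {src for day, src in items[i:] if day < end}
            if len(sources) >= min_sources:
                flagged[subject_id] = sorted(sources)
                break
    return flagged


events = [
    ("S-017", "it_logs", date(2025, 6, 2)),             # after-hours database access
    ("S-017", "hr", date(2025, 6, 10)),                 # formal complaint
    ("S-017", "physical_security", date(2025, 6, 21)),  # restricted-area entry
    ("S-017", "social_media", date(2025, 7, 1)),        # post meeting leakage threshold
    ("S-042", "hr", date(2025, 6, 15)),
    ("S-042", "hr", date(2025, 7, 10)),
]
print(correlate(events))
# {'S-017': ['hr', 'it_logs', 'physical_security', 'social_media']}
```

S-042 has two events, but both come from one system, so the sketch does not flag them. Counting distinct sources rather than raw events keeps a single noisy feed from dominating.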

Resistance to narrative bias. Investigators, managers, and colleagues all want a concerning person to turn out not to be concerning. That bias isn't corruption. It's the default psychological response of people who like the person they're assessing, or who don't want to believe they work next to a threat. A scoring system has no social relationship with the subject. It reads the signals as signals.

An audit trail. When a case escalates to intervention, or, worse, when one is missed and has to be reviewed after the fact, the question is always the same: what did we know, and when did we know it? A scoring system answers that question with a timestamped log of inputs, weights, and score changes. A shared inbox and a few loose notes don't.
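
Here is a minimal audit log sketch in Python. Each input is stored with its timestamp, source, weight, and the score before and after. The "what did we know and when" question then becomes a filter by date. The class and field names are hypothetical, and the additive score update is a simplification rather than a recommended model.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    timestamp: datetime   # when the input was recorded (UTC)
    subject_id: str       # hypothetical case identifier
    indicator: str        # what was observed, e.g. "leakage"
    source: str           # which system reported it
    weight: float         # weight applied to this input
    score_before: float
    score_after: float


class AuditLog:
    """Record of every input and the score change it caused.

    Exposes no update or delete methods. Assumes entries are recorded in
    chronological order and that each input simply adds its weight to the
    running score (a deliberate simplification).
    """

    def __init__(self):
        self._entries = []

    def current_score(self, subject_id):
        for entry in reversed(self._entries):
            if entry.subject_id == subject_id:
                return entry.score_after
        return 0.0

    def record(self, subject_id, indicator, source, weight, when=None):
        when = when or datetime.now(timezone.utc)
        before = self.current_score(subject_id)
        entry = AuditEntry(when, subject_id, indicator, source, weight,
                           before, before + weight)
        self._entries.append(entry)
        return entry

    def known_as_of(self, subject_id, cutoff):
        """What did we know about this subject, and when? Entries up to cutoff."""
        return [e for e in self._entries
                if e.subject_id == subject_id and e.timestamp <= cutoff]

    def to_jsonl(self):
        """Serialize to JSON Lines for export or post-incident review."""
        return "\n".join(
            json.dumps({**asdict(e), "timestamp": e.timestamp.isoformat()})
            for e in self._entries
        )


log = AuditLog()
log.record("S-017", "hr_complaint", "hr", 1.0,
           when=datetime(2025, 1, 14, tzinfo=timezone.utc))
log.record("S-017", "leakage", "social_media", 2.5,
           when=datetime(2025, 3, 3, tzinfo=timezone.utc))
print(log.current_score("S-017"))  # 3.5
print(len(log.known_as_of("S-017", datetime(2025, 2, 1, tzinfo=timezone.utc))))  # 1
```

Storing the score before and after each input, not just the latest total, lets a reviewer reconstruct the trajectory at any past date.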

Where risk scoring fails

It fails when it is treated as a product instead of a process.

The most common failure mode is narrow sourcing. A program that pulls from HR alone, or IT alone, or open-source monitoring alone, will miss the pattern every time, because warning behaviors don't respect organizational silos. Pathway can show up in a parking garage; leakage can show up on a Discord server; fixation can show up in a Slack channel no one reads. A score that only sees one domain is a score that confirms what that domain already knew.

Risk scoring also fails when the thresholds are tuned to reduce false positives instead of to detect acceleration. A system optimized for quiet is a system that will be quiet when it shouldn't be. False positives cost an afternoon of analyst time. False negatives cost the thing the system exists to prevent.

And it fails when the score isn't wired to anyone who can act. A number that updates in a dashboard nobody watches, or that feeds into a workflow that requires six approvals to act on, is a number that will be discussed at the post-incident review.

Finally, and this is the one most worth saying out loud, it fails when the organization running it confuses measurement with prevention. A risk score is a decision-support tool. It tells you where to look harder and when to intervene. It does not tell you what to do about the underlying grievance, and it does not substitute for the human work of engagement, support, referral, and, when lawful and necessary, restraint.

Our Predictive Threat Intelligence division builds and operates these scoring systems, the early warning systems that sit underneath a real threat assessment function, for organizations that have accepted what the research has said for two decades: targeted violence is preceded by observable behavior, and those behaviors accelerate in patterns that can be detected if anyone is watching the score move.

The uncomfortable part

Most organizations that think they have a risk scoring program have a list. A list is not a score. A list is a set of names someone once worried about, frozen at whatever moment of concern got them on the list, never updated, never correlated, never reassessed.

Meanwhile, the person whose score should be climbing is not on the list, because the first indicator that would have flagged them showed up in a system nobody correlates with the list.

That is how the gap between detection and action becomes the gap between a file and an incident.

The fix is not a better spreadsheet. The fix is a scoring system that captures inputs from every relevant domain, updates continuously, weights behaviors the way the research says to weight them, and routes changes in trajectory to someone with the authority to act. Most organizations don't have that. The ones that do tend not to make the news.

That is the outcome the system is designed to produce. Nothing happens. Nobody hears about it. And the score that was climbing stops climbing, because someone intervened while intervention was still an option.

Ready to Take the Next Step?

Learn how Sequenxa can help protect your organization with intelligence-driven solutions.